The AI Triangle: The Bottleneck Nobody Priced In

This week, a speculative blog post by Citrini Research predicting that AI would crash the S&P 500 by 57% and push unemployment past 10% by 2028 helped trigger a real sell-off in software and tech stocks. IBM fell 13% — its worst day since 2000. CrowdStrike and Datadog dropped 10-11%. The S&P 500 closed down 1%. The reaction split into two camps: one that treated it as a serious warning about what’s coming, and one asking why we’re reading long-range economic fan fiction as actionable analysis.

That split tells you everything about where we are with AI right now.

The models are getting better, faster, and more precise. That part is real. But the conversation around what this means — for costs, for jobs, for entire industries — has become so polarized that a speculative scenario can move real capital. One side says AI will replace everyone and we should prepare for the worst. The other says the hype is overblown and nothing will change.

I think both sides are wrong. And I think the reason they’re wrong comes down to something most people aren’t paying attention to.

This isn’t hype — but it isn’t magic either

I don’t want to dismiss what’s happening. People in the software engineering world are seeing changes that would have sounded absurd three years ago. 2025 was the year AI agents got good enough to write code with minimal supervision. I’ve seen cases where engineers barely look at the codebase anymore — the agent writes it, the tests pass, and it ships. Three years ago, LLMs produced more bugs than useful code. Now they build features end to end.

And it’s not just software. LLMs are being injected into healthcare, science, transportation, and dozens of other industries. There are genuine breakthroughs — models finding patterns in medical data that trained doctors miss. Everybody is scared and excited about where 2026 will take us.

So here’s what actually happened to me — because I think it’s a preview of what will happen to a lot of industries.

My coding story

When I started using Claude Code for real development work, my direct involvement in writing code dropped fast. I went from writing 100% of the code to maybe 10%. It felt like magic. I was shipping faster than ever.

Then it started creeping back up. Not because the tool got worse — it got better. But I found myself needing to step in and write code to help the LLM understand the global structure. The patterns I had in my head from years of experience — how systems should connect, where the boundaries should be, what will break six months from now — the agent couldn’t see those. It needed me to lay the tracks.

My hands-on coding settled somewhere around 20%. Still a huge drop from 100%. Sounds great, right?

Here’s the part nobody talks about: the ratio flipped. I used to spend most of my time building and a sliver reviewing. Now a huge chunk of my day is reading and checking code I didn’t write, essentially doing PR reviews all day. Every developer knows that PR reviews are the task everyone postpones. Now they’re becoming the job. And it’s not just reviewing for bugs or style issues: I’m checking whether the AI’s changes still fit the architecture, whether the boundaries hold, whether something will break three services away. That requires keeping the whole system in your head, constantly, which is its own kind of mental exhaustion.

The net productivity gain is real. But it’s nowhere near the 10x people promised.

And here’s the uncomfortable part: I’m human. I get lazy. When you’re reviewing output all day instead of creating it, your attention drifts. Things slip through. I’ve caught myself letting questionable code pass that I never would have written myself. The quality floor dropped, not because the AI writes bad code, but because the review burden is exhausting in a way that writing code never was.

It’s not just me noticing this. Russ Cox, the former tech lead of Go at Google (Go is the programming language behind a large share of cloud infrastructure and the software that runs on it), recently wrote to the Go development team about AI tools in their workflow. Two things he said stuck with me:

“People brag about codebases of hundreds of thousands of lines that have never been viewed by people, churned out in record time. On closer inspection, these codebases inevitably turn out to be more like dancing elephants than useful engineering artifacts.”

“The tools make it very easy to turn off your brain, but if you are careful to avoid that trap, you can produce good work.”

Dancing elephants. That’s the perfect description for what happens when you let AI generate without oversight. Impressive to look at, not something you’d want to rely on. Cox’s broader point is that AI doesn’t change the fundamentals of software engineering — you still need to think, review, and take responsibility for what ships. No tool relieves you of that.

What this looks like outside software

In software, a bug means a Jira ticket and a hotfix. The stakes are usually low. But in healthcare, transportation, and construction, the margin for error is a lot thinner. The analog of code review there is chart review and second reads, with liability attached.

Right now, these industries are in the euphoria phase, and for good reason. AI models are flagging cancers that doctors miss. They’re optimizing routes that save fuel and time. The progress is genuine and worth celebrating.

But here’s what I noticed in myself as a software engineer: over time, I started trusting the output more. Reviewing less carefully. Assuming the model got it right because it got it right the last fifty times. The attention that used to be second nature became a conscious effort I had to force myself to maintain.

I suspect the same pattern will show up in other fields too. These industries have stronger oversight cultures than software, so maybe the safeguards hold. But the underlying tendency is universal — we calibrate our effort to the perceived risk, and every correct AI output lowers that perceived risk a little more. That’s worth thinking about.

The triangle: money, time, and effort

I keep coming back to the old project management triangle — you can pick two of three, but never all three. I think it applies to AI, except the three corners are money, time, and effort — by effort I mean ongoing human attention: review, audits, incident response, and accountability.

Money: the infinite loop.

Total spending on AI infrastructure can keep rising even if the cost per unit falls — because usage expands faster than efficiency gains. Hardware depreciates, electricity isn’t getting cheaper, and none of it lasts forever.

But here’s the part that makes it worse: the more problems we solve with AI, the more infrastructure we need. It’s a compounding loop:

1. We use AI to solve a problem.

2. The solution creates new possibilities, and new problems.

3. We throw more AI at those.

4. Each round requires more infrastructure. Go back to step 1.

I see this in my own work at a micro level. Claude Code helps me ship features faster. Faster shipping means a bigger codebase. A bigger codebase surfaces more things to build, fix, and optimize. So I use more AI. Which needs more compute. Which costs more.

Every problem you solve with AI creates demand for more AI. The loop doesn’t flatten — it compounds.

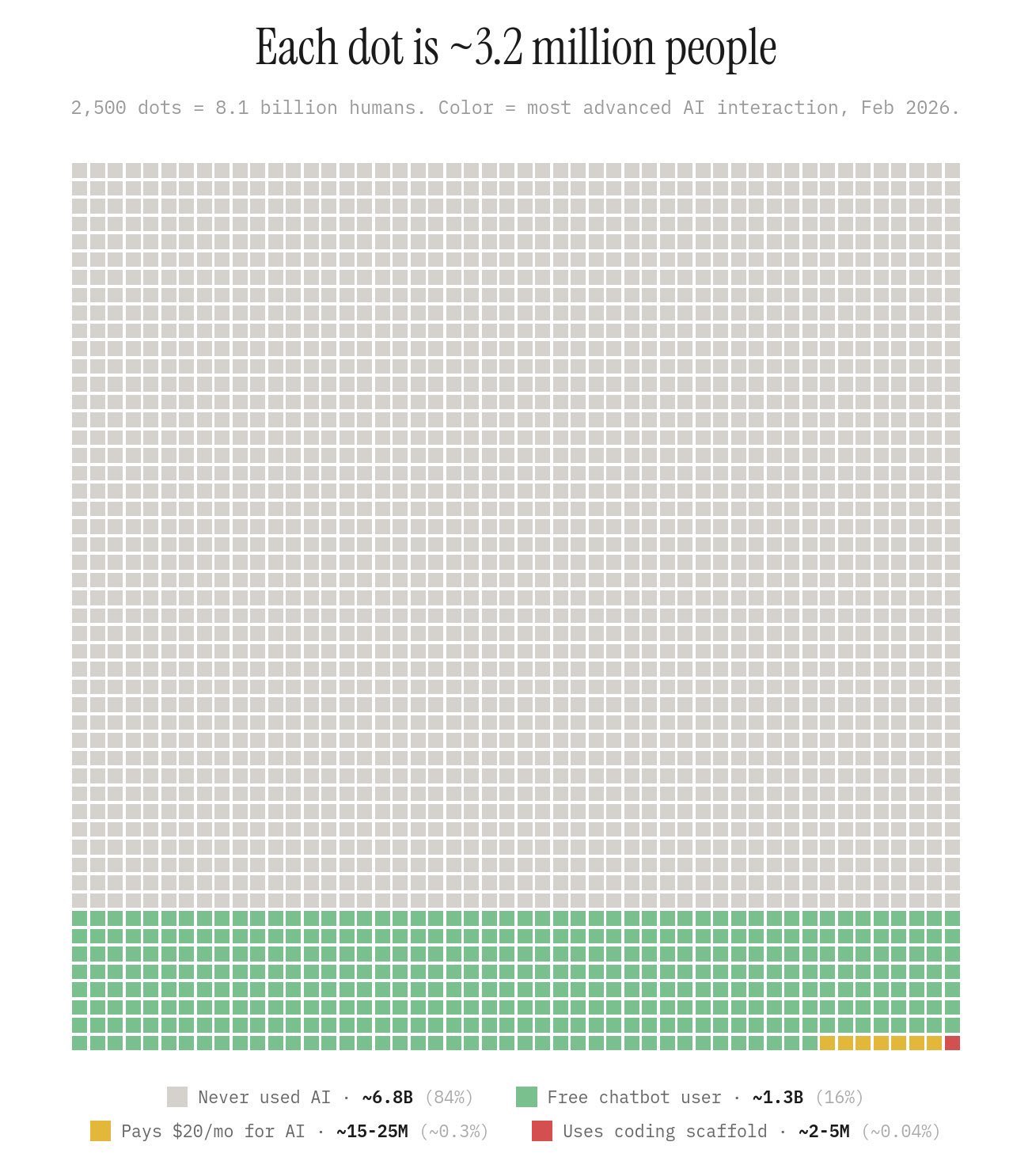

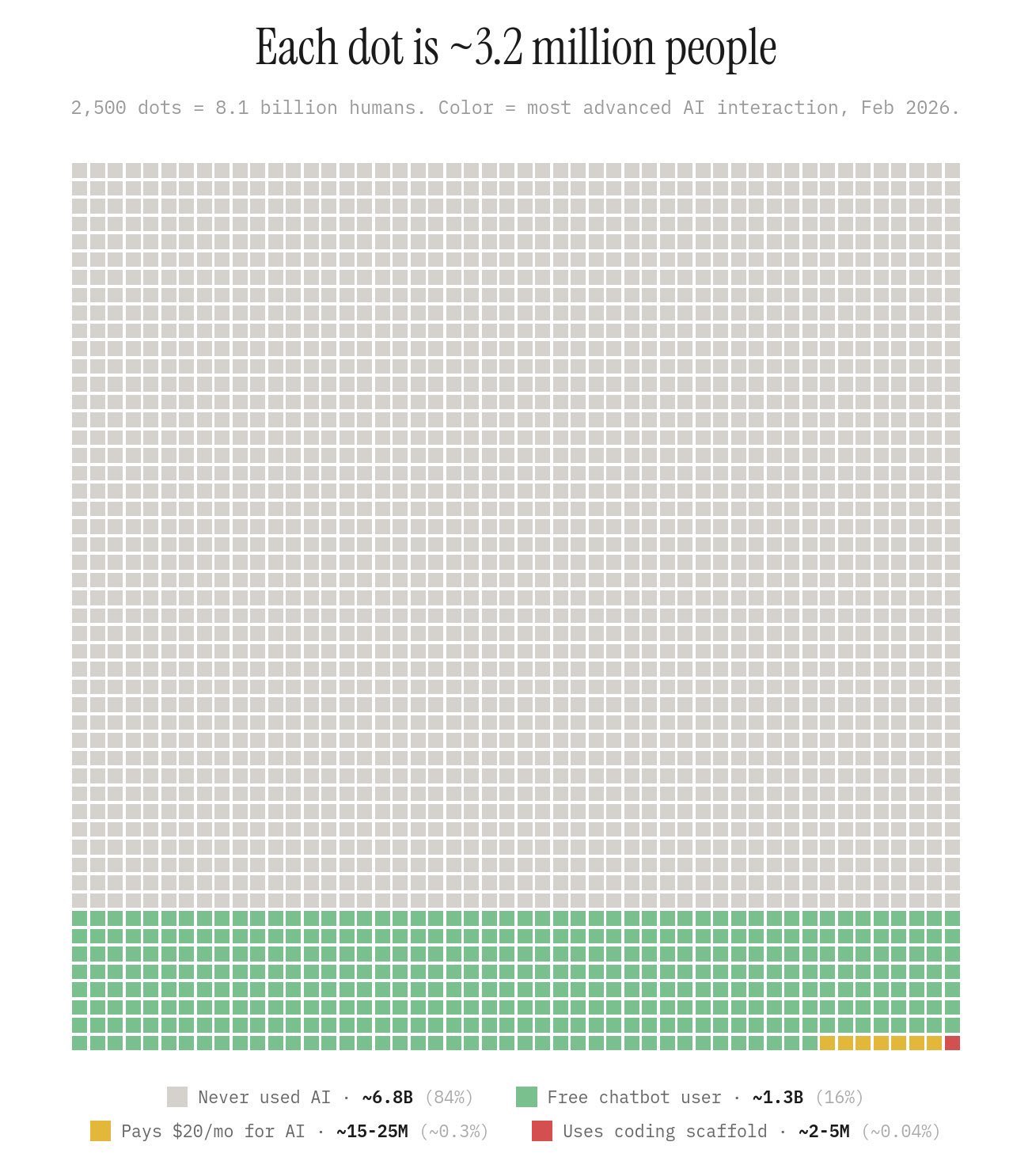

Right now, we’re papering over this with VC money. About 16% of the world’s population has tried or is using AI, but only about 0.3% are actually paying for it.

Meanwhile, according to OECD data, AI firms captured 61% of all global venture capital in 2025 — $258.7 billion out of $427.1 billion — more than doubling AI’s share since 2022. Of that, $109.3 billion went to AI infrastructure alone. The math here is not sustainable. At some point, the real costs have to land somewhere.
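As a quick sanity check, the shares can be recomputed from the dollar amounts quoted above. This is a minimal sketch: the constant and function names are mine, and the inputs are only the figures cited in the text, not an independent data source.

```python
# Dollar figures in billions of USD, exactly as quoted in the text above.
TOTAL_VC_2025 = 427.1
AI_VC_2025 = 258.7
AI_INFRA_VC_2025 = 109.3


def share(part: float, whole: float) -> float:
    """Return part/whole as a percentage, rounded to one decimal place."""
    return round(100 * part / whole, 1)


ai_share_of_vc = share(AI_VC_2025, TOTAL_VC_2025)
infra_share_of_ai = share(AI_INFRA_VC_2025, AI_VC_2025)
infra_share_of_vc = share(AI_INFRA_VC_2025, TOTAL_VC_2025)

print(f"AI share of all VC:          {ai_share_of_vc}%")
print(f"Infra share of AI funding:   {infra_share_of_ai}%")
print(f"Infra share of all VC:       {infra_share_of_vc}%")
```

On these numbers, roughly four in ten AI venture dollars, and about a quarter of all venture dollars, went to infrastructure.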

Time: efficiency won’t save us.

There’s a common assumption that AI will get more efficient over time — smaller models, better architectures, cheaper inference — and that will solve the cost problem. Maybe. But even if we make it 10x more efficient, we won’t use 10x less of it. We’ll use 100x more.

This isn’t a new pattern. It has a name: the Jevons paradox. When coal-fired steam engines got more efficient in the 1800s, total coal consumption went up, not down, because efficiency made new uses viable. The same thing is already happening with AI. Every efficiency gain unlocks new use cases, more users, bigger models for harder problems. The demand curve doesn’t bend down; it accelerates.

So even the optimistic scenario — breakthroughs in efficiency, cheaper inference — likely leads to more total infrastructure, not less. The cost per token drops, but the number of tokens explodes.
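The arithmetic behind that claim is simple enough to sketch. The numbers below are the illustrative ones from this section (10x cheaper per token, 100x more usage), not measurements, and the function name and baseline values are hypothetical.

```python
def total_spend(cost_per_token: float, tokens: float) -> float:
    """Total spend is unit cost times volume."""
    return cost_per_token * tokens


# Hypothetical baseline in arbitrary units; only the ratios matter.
baseline = total_spend(cost_per_token=1.0, tokens=1_000)

# Assumed scenario from the text: each token gets 10x cheaper,
# but usage grows 100x.
after = total_spend(cost_per_token=1.0 / 10, tokens=1_000 * 100)

growth = after / baseline
print(f"Total spend changes by a factor of {growth:.1f}")
```

Under those assumptions the bill goes up about tenfold even though every individual token got cheaper. Spend only falls if usage grows more slowly than efficiency improves.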

And then there’s the human side of time. AI does the work in minutes, but a human takes hours to verify it was done correctly. My code review story isn’t unique. It’s the pattern everywhere. The bottleneck shifts from doing to checking, and checking doesn’t get faster just because the doing did.
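A back-of-the-envelope sketch shows why the overall gain stays small when checking becomes the slow step. All the hours below are hypothetical, picked only to illustrate the shape of the problem, and the helper names are mine. The reasoning is similar in spirit to Amdahl's law: the part you don't speed up sets the ceiling.

```python
def task_hours(produce_hours: float, verify_hours: float) -> float:
    """Hours for one task: produce the work, then have a human check it."""
    return produce_hours + verify_hours


def speedup(before_hours: float, after_hours: float) -> float:
    """How many times faster the 'after' workflow is."""
    return before_hours / after_hours


# Hypothetical numbers for illustration only.
by_hand = task_hours(produce_hours=4.0, verify_hours=1.0)  # human writes, peer reviews
with_ai = task_hours(produce_hours=0.1, verify_hours=2.0)  # agent writes, human reviews harder

# Even if generation took no time at all, verification sets the ceiling.
ceiling = task_hours(produce_hours=0.0, verify_hours=2.0)

production_speedup = speedup(4.0, 0.1)
overall_speedup = speedup(by_hand, with_ai)
best_case_speedup = speedup(by_hand, ceiling)

print(f"Production alone: {production_speedup:.0f}x faster")
print(f"Whole task:       {overall_speedup:.2f}x faster")
print(f"Best case:        {best_case_speedup:.2f}x faster")
```

With these made-up inputs, producing the work gets about 40x faster while the whole task gets only about 2.4x faster, and no improvement in generation alone can push it past 2.5x.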

Effort: the human involvement nobody budgets for.

This is the corner that gets the least attention, and I think it matters the most.

There’s a popular narrative right now that AI will replace most jobs in the next few years — programmers, doctors, lawyers, writers, everyone. In my experience, the reality is different.

I write less code now. That part is real. But my total cognitive load went up, not down. I shifted from creating to verifying, and verifying someone else’s work — even an AI’s — is a different kind of exhausting. It’s less engaging, more tedious, and harder to sustain over long periods. AI didn’t remove the need for me. It changed what I do, and the new version of my job requires more attention, not less.

The “AI replaces everyone” story assumes you can take humans out of the loop entirely. But in high-stakes systems, someone has to own the outcome — often a human, sometimes a process with audits and liability. What actually happens is that the human role shifts from doing to overseeing — and overseeing at scale is its own full-time job that nobody budgeted for.

The triangle tension is this: you can’t optimize all three corners.

- Cheaper AI → slower or less accurate → more human verification needed

- Faster AI → more expensive → eventually someone has to pay

- Less human involvement → more risk → harder to catch when something goes wrong

Where this lands

LLMs are tools. Good tools. The best tools I’ve used in 20 years of building software.

But the bottleneck was never generation — it was verification. And nobody priced that in. Not the VCs writing checks, not the companies adopting AI, not the engineers excited about shipping faster.

The future of AI isn’t “everything is automated.” It’s “everything is generated — and everything needs to be checked.” That’s a real shift in how work gets done, but it’s not the revolution that justifies hundreds of billions in infrastructure spending.

The companies that will get real value from AI are the ones that budget for oversight the same way they budget for compute. The ones chasing “remove all humans from the loop” will hit a wall — not because the technology failed, but because they forgot that someone still has to own the outcome.

AI made my work better. It also made it different in ways I didn’t expect and nobody warned me about. I think that’s the honest version of this story, and the sooner we stop pretending otherwise, the sooner we can build something that actually lasts.